What is DevOps and Why You Should Have It

An approach? A tool? A role? Few questions have as many different answers as “What is DevOps?” Let’s finally answer it and also learn why using DevOps leads to faster product delivery and higher quality.

This article covers the idea underlying the DevOps approach and the benefits of integrating it into the software development process. It also describes the most popular practices and DevOps tools, along with some recommendations on using them. You’ll also find information on different ways to acquire DevOps certification.

After reading this article, you will be able to draw your own conclusions about DevOps. And if you have any additional questions, you can always contact us.

What DevOps is, and Why It Matters

We prefer to divide DevOps into three levels of abstraction: philosophy, practices, and tools.

The cornerstone of DevOps is its philosophy: to create an agile and scalable system by involving both operational and development engineers in all stages of product creation, from idea to release.

To integrate this philosophy into the workflow, you use certain practices — CI/CD, automation, infrastructure as code, microservice architecture, etc. We’ll talk about them in detail a bit later.

And, finally, there are plenty of tools to implement those practices: Docker, Jenkins, CircleCI, and many more.

The point is, DevOps doesn’t start with tools or practices. The only right way to apply the approach is to understand the idea behind it, and only then choose instruments to help you bring that idea to life.

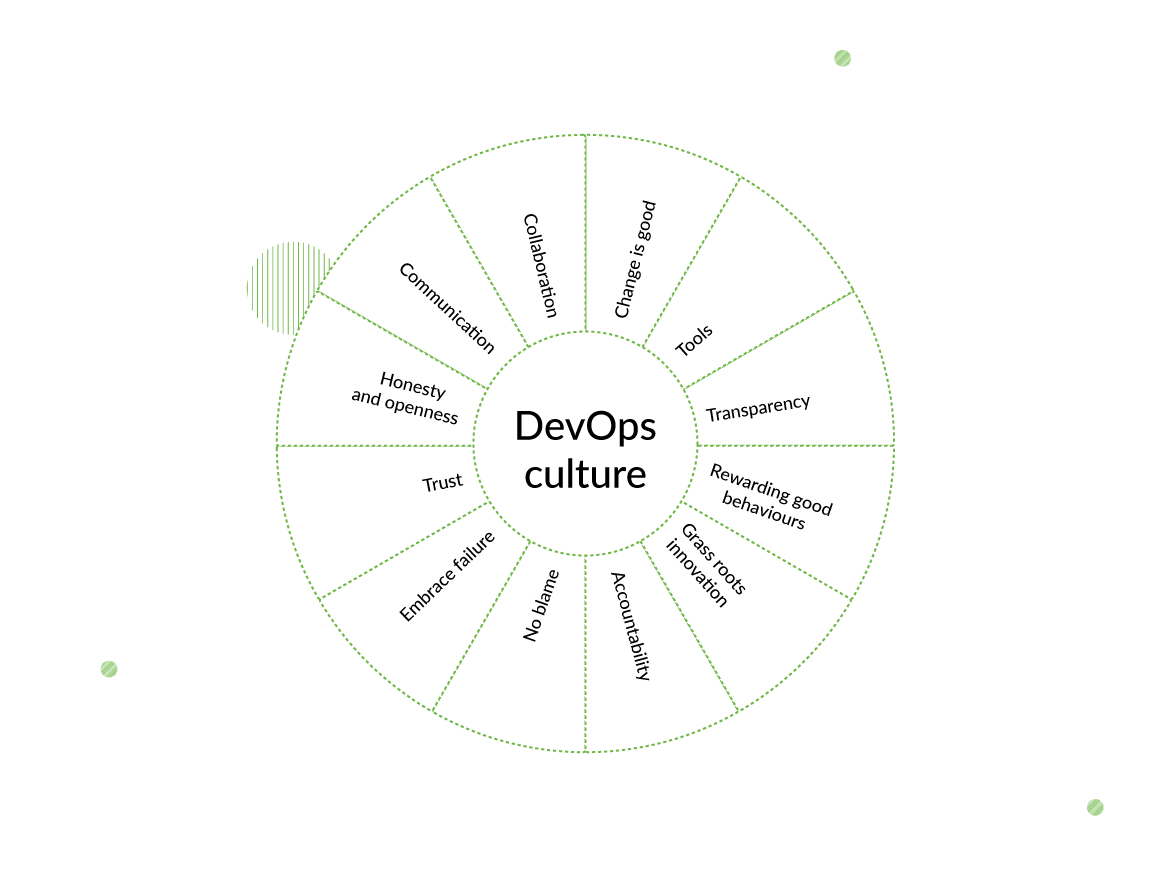

What does DevOps philosophy mean for your company? It means that the operations team’s task no longer begins and ends with setting up and supporting the basic infrastructure while not caring about the products at all (as was the case for a long time). And, of course, the development team’s task no longer ends once the code is written.

Now both sides continuously work together as a united DevOps team, improving development and delivery, making them as efficient as they can be, sharing responsibilities and having a deep understanding of each other’s processes.

Applied thoughtfully, it results in a huge productivity boost at all stages — from fixing a minor bug to implementing a whole new module, from processing users’ feedback to reacting to last-minute changes.

Today the whole industry is all about agile product development (and all of us have seen its advantages in action), so integrating DevOps is a perfect way to deeply support agile values: it allows us to deliver software frequently through small, iterative improvements.

And that’s exactly what we’re talking about when we say “agile”!

By the way, since we’re experts in Python/Django development, we get asked a lot about Django-DevOps. The purpose of the Django-DevOps repository is to provide a set of software tools that help you create and deploy Django projects.

But that’s a topic for another time, so let’s get back to the advantages and disadvantages of the DevOps approach.

The Advantages of the DevOps Approach

In business, every decision should be cost-effective, and DevOps integration requires a lot of resources. In some cases it just isn’t worth it, for example, if you’re building an MVP. However, if you know from the very beginning that your product is going to grow and change, here is the list of benefits you’ll get from DevOps.

Smooth collaboration

This is the foundation of the approach and also its biggest benefit. Well-established communication between teams and team members saves a lot of time. And, as clichéd as it may sound, time is money.

It also spares everyone a lot of frazzled nerves, because the less time people spend waiting, the more focused and motivated the team stays. Another benefit is a lower bus-factor risk, that is, the risk that all of the team members who possess certain knowledge become unavailable at once.
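To make the bus-factor idea concrete, here is a minimal sketch that finds the weakest spot in a team’s shared knowledge. It assumes a hypothetical mapping from module names to the people who know each module well and uses a simplified per-module definition: the smallest number of people whose absence would leave some module with nobody who understands it.

```python
from typing import Dict, Set, Tuple


def weakest_module(knowledge: Dict[str, Set[str]]) -> Tuple[str, int]:
    """Return the module known by the fewest people and how many people know it.

    That number is a simplified per-module bus factor: if that many people
    become unavailable at once, nobody left on the team knows the module.
    """
    module = min(knowledge, key=lambda name: len(knowledge[name]))
    return module, len(knowledge[module])


# Hypothetical team: module name -> people who know it well.
team = {
    "billing": {"anna", "oleh"},
    "deploy scripts": {"oleh"},
    "frontend": {"anna", "ivan", "maria"},
}
print(weakest_module(team))  # ('deploy scripts', 1)
```

Here a single absence would block any work on the deploy scripts, which is exactly the kind of risk that smooth collaboration and shared knowledge reduce.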

Scalability

If DevOps practices are implemented from the very beginning, room for expansion is built into the infrastructure and architecture. And when many processes (like testing) are automated, maintaining the expanded system doesn’t take much more time than maintaining the previous version.

Flexibility

Products built using the DevOps approach consist of small, independent and easily configurable modules (microservices), which developers can quickly replace, change, or add whenever they need to.

Infrastructure, in the DevOps model, is also agile and can be easily configured at any moment.

Flexibility is also manifested in quick reactions to users’ feedback or sudden issues.

Reliability

Ideally, the process is set up so that you always have the last working copy of the product, you immediately receive a report about any issue, minor or major, and release and deployment processes are completely automated. So, in the event of a disaster, you can quickly perform a recovery or rollback and have the system up and running in no time.
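The rollback idea can be sketched in a few lines of Python. This is a simplified illustration, not a production deployer: the `Deployer` class and its `health_check` callback are hypothetical, and “switching traffic” is modeled as simply changing which version is marked active.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class Deployer:
    """Simplified, hypothetical deployer that can fall back to the last working version."""
    health_check: Callable[[str], bool]  # assumption: True if the version serves traffic correctly
    active: Optional[str] = None         # version currently serving traffic
    last_good: Optional[str] = None      # last version that passed its health check

    def deploy(self, version: str) -> str:
        self.active = version  # switch traffic to the new version
        if self.health_check(version):
            self.last_good = version
            return f"deployed {version}"
        # The new version is broken: roll back to the last working copy.
        self.active = self.last_good
        if self.last_good is None:
            return f"{version} failed; no working version to roll back to"
        return f"{version} failed; rolled back to {self.last_good}"


deployer = Deployer(health_check=lambda version: version != "v3")  # pretend v3 is broken
print(deployer.deploy("v2"))  # deployed v2
print(deployer.deploy("v3"))  # v3 failed; rolled back to v2
```

In a real setup the health check would probe the running service (for example, an HTTP status endpoint), but the decision logic stays the same.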

Speed

All the benefits above boil down to one: no matter what you are doing (adding new features, switching environments, fixing bugs, etc.), you can do it much faster with the DevOps approach than without it. And the more complex and fast-evolving your system is, the more you need DevOps.

Main DevOps Practices and How We Use Them

Enough abstractions! Let’s discuss some specifics, such as what you need to do to get those benefits. There are many DevOps practices, and covering all of them would require a book, so we’ll share the ones we use in our company.

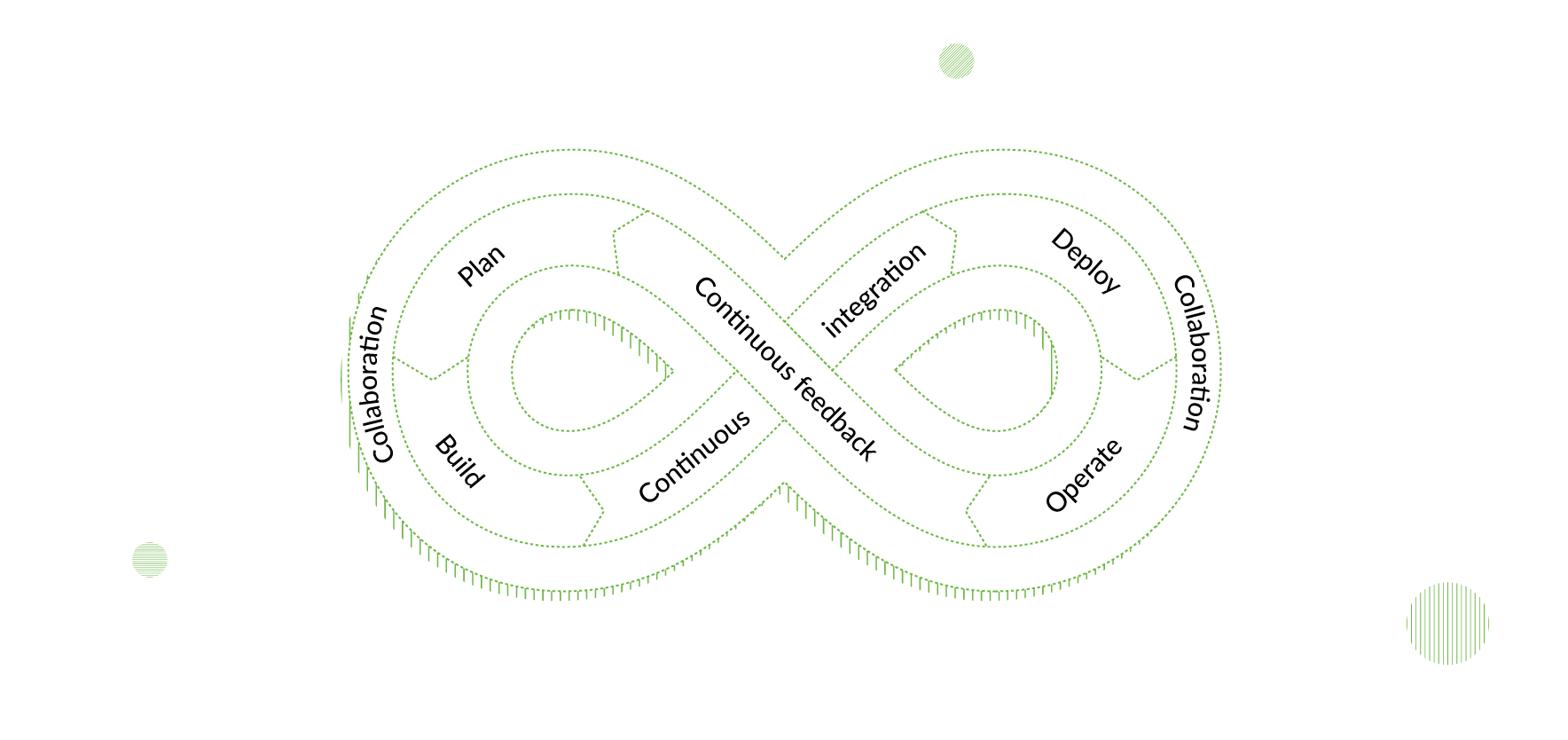

Continuous integration and continuous delivery (CI/CD)

Continuous integration means that developers regularly merge their code changes into the main branch, and each merge triggers an automated build and test run.

In other words, after finishing a task, the developer pushes the changes to the shared repository. The CI tool then detects the changes, builds the project, and runs the tests. If there is an issue, the developer receives a notification describing it; if no error occurred, they simply get a confirmation of a successful run.

Therefore, continuous integration helps to detect and resolve conflicts and errors at the earliest stage possible.
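The core of a CI run, executing the tests and turning the result into a notification, can be sketched as below. This is a hypothetical helper, not the API of any particular CI tool; by default it assumes the project’s tests are discoverable by Python’s built-in `unittest` runner.

```python
import subprocess
import sys
from typing import List, Optional


def run_ci_check(test_command: Optional[List[str]] = None) -> str:
    """Run the test suite and return the notification a developer would receive."""
    if test_command is None:
        # Default assumption: the project's tests are discoverable by unittest.
        test_command = [sys.executable, "-m", "unittest"]
    result = subprocess.run(test_command, capture_output=True, text=True)
    if result.returncode == 0:
        return "CI: run successful"
    # Keep the last lines of output so the developer sees what went wrong.
    output_tail = (result.stdout + result.stderr).strip().splitlines()[-5:]
    return "CI: run failed\n" + "\n".join(output_tail)
```

In a Django project, you could pass `["python", "manage.py", "test"]` as the command. A real CI server adds the parts this sketch leaves out: watching the repository for new commits and delivering the message by email, chat, or a status check.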

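Continuous delivery, in turn, means that every change that passes these automated checks is kept ready for release, so a new version can be deployed to the production environment regularly and with little manual effort. When that final deployment step is automated too, the practice is usually called continuous deployment.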

Implementing CI/CD provides a high level of automation at all stages, reduces the risk of human error, and lets the team concentrate on building the product, minimizing the time and effort spent on manual operational work. The result is faster development and, consequently, more frequent and less risky releases.
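A CI/CD pipeline is essentially a sequence of stages where each stage runs only if the previous one succeeded. Here is a minimal sketch of that gating logic, assuming each stage is a hypothetical zero-argument function that returns `True` on success; real tools such as Jenkins or CircleCI describe stages in their own configuration formats.

```python
from typing import Callable, List, Tuple

# A stage is a (name, action) pair; the action returns True on success.
Stage = Tuple[str, Callable[[], bool]]


def run_pipeline(stages: List[Stage]) -> List[str]:
    """Run stages in order and stop at the first failure, so broken builds never get deployed."""
    log = []
    for name, action in stages:
        if action():
            log.append(f"{name}: ok")
        else:
            log.append(f"{name}: FAILED, pipeline stopped")
            break
    return log


stages = [
    ("build", lambda: True),
    ("test", lambda: False),  # pretend a test failed
    ("deploy", lambda: True),
]
print(run_pipeline(stages))  # ['build: ok', 'test: FAILED, pipeline stopped']
```

Because the deploy stage never runs after a failed test stage, a release reaches production only after passing every automated check.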

Infrastructure as code

Traditionally, information about infrastructure configuration was stored in one of two places: the memory of a system administrator or a particular machine (where only a system administrator could read it). But a person can get sick, and a machine can be stolen or broken. That leaves us with a huge bus-factor risk, which we mentioned earlier.

Clearly, we need to store this kind of information in a way that lets any team member read and change it, so that it doesn’t depend on the availability of a single expert or a single piece of hardware.

The infrastructure as code practice means that system resources and infrastructure settings are defined in machine-readable definition files, which allows DevOps engineers to manage them just like program code. These files can be stored in the same version control system as the application code, so they can also be reviewed and reverted. Moreover, changes to these files can be deployed to the production environment automatically.
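The key idea behind infrastructure as code is declarative: you describe the desired state, and a tool works out what has to change. Tools like Terraform do this when they compute a plan; the sketch below is only a simplified illustration of that comparison, not Terraform’s implementation, and the resource names and settings are hypothetical.

```python
from typing import Dict, List

# Hypothetical resource definitions: resource name -> settings.
Resources = Dict[str, Dict[str, object]]


def plan(desired: Resources, actual: Resources) -> List[str]:
    """Compare the declared infrastructure with what is running and list the changes needed."""
    changes = []
    for name, settings in desired.items():
        if name not in actual:
            changes.append(f"create {name}")
        elif actual[name] != settings:
            changes.append(f"update {name}")
    for name in actual:
        if name not in desired:
            changes.append(f"delete {name}")
    return changes


desired = {"web": {"size": "large", "count": 3}, "db": {"engine": "postgres"}}
actual = {"web": {"size": "small", "count": 3}, "cache": {"engine": "redis"}}
print(plan(desired, actual))  # ['update web', 'create db', 'delete cache']
```

Since `desired` lives in a file under version control, every infrastructure change goes through the same review and revert workflow as application code.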

Microservices

Microservice architecture implies that the application is divided into small parts, each built around one elementary function. They can even be written in different programming languages, use different frameworks, and operate under different operating systems!

Microservices are easier to test, maintain, and reuse. Dependencies between them are kept to a minimum, so adding a new service usually requires little or no change to the existing ones. However, this approach can’t work without thoughtfully designed testing.

A project built on microservices is well prepared for growth and significant changes.

Configuration management

This practice requires detailed recording and updating of the information that describes hardware and software (released updates, versions, environment settings, etc.). Simply put, the company always has up-to-date, detailed information about all of its resources.

These records provide great insurance in case any major issues arise, because you can always perform a rollback and restore any configuration version. For some businesses, even one second of server downtime can lead to a million-dollar loss, so configuration management makes the system significantly more reliable.
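Here is a minimal sketch of the “record every version, roll back to any of them” idea. The `ConfigHistory` class and the setting names are hypothetical; in practice this history usually lives in version control or a dedicated configuration management tool.

```python
import copy
from typing import Any, Dict, List


class ConfigHistory:
    """Append-only history of configuration versions (a simplified, hypothetical store)."""

    def __init__(self, initial: Dict[str, Any]) -> None:
        self._versions: List[Dict[str, Any]] = [copy.deepcopy(initial)]

    @property
    def current(self) -> Dict[str, Any]:
        # Return a copy so callers cannot silently modify recorded history.
        return copy.deepcopy(self._versions[-1])

    def update(self, **changes: Any) -> int:
        """Record a new version with some settings changed; return its version number."""
        self._versions.append(copy.deepcopy({**self._versions[-1], **changes}))
        return len(self._versions) - 1

    def rollback(self, version: int) -> int:
        """Restore an earlier version by recording it again as the newest one."""
        self._versions.append(copy.deepcopy(self._versions[version]))
        return len(self._versions) - 1


config = ConfigHistory({"python_version": "3.11", "debug": False})
config.update(debug=True)  # version 1: a risky change
config.rollback(0)         # something broke: restore version 0
print(config.current)      # {'python_version': '3.11', 'debug': False}
```

A rollback appends the old version instead of deleting newer ones, so the record of what happened, including the failed change, is never lost.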

Monitoring and logging

No test can provide information with the same reliability and volume as actual data from actual users. Gathering, categorizing, and analyzing logs lets the team detect issues, come up with new features and improvements, and continuously make products and processes better.

Metrics like “average time on page” or “average age of user” can help you understand more about your audience and what changes you should make to increase sales or simplify the UX.
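As an example of turning logs into a metric, the sketch below computes the average time on page from structured logs. It assumes one JSON object per log line with hypothetical `page` and `seconds_on_page` fields; your own log format will differ, and a production version would also skip malformed lines.

```python
import json
from statistics import mean
from typing import Iterable


def average_time_on_page(log_lines: Iterable[str], page: str) -> float:
    """Average the hypothetical `seconds_on_page` field over JSON log records for one page."""
    durations = []
    for line in log_lines:
        record = json.loads(line)  # one JSON object per log line
        if record.get("page") == page and "seconds_on_page" in record:
            durations.append(record["seconds_on_page"])
    return float(mean(durations)) if durations else 0.0


logs = [
    '{"page": "/pricing", "seconds_on_page": 30}',
    '{"page": "/pricing", "seconds_on_page": 50}',
    '{"page": "/blog", "seconds_on_page": 12}',
    '{"page": "/pricing", "event": "click"}',
]
print(average_time_on_page(logs, "/pricing"))  # 40.0
```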

Automation

Essentially, the whole DevOps approach aims to eliminate unnecessary manual work. Version control, testing, deployment: if anything can be automated, you automate it. It may require some resources at the beginning, but it will give you a noticeable head start soon afterwards. If your goal is to create a truly scalable and flexible system, automation is the best investment you can make.

DevOps Tools We Use

As we’ve mentioned, for every practice, there is a set of tools you can use to apply it. Here are the DevOps tools our software development team uses daily, and why we recommend them:

- Docker for containerization

Containerization allows developers to create software independently of the environment in which it will run. The software and its dependencies are packed into standardized units (containers) that can be deployed in any environment that supports containers.

Of course, Docker is not the only tool for this, but it is the most popular one.

- Jenkins and CircleCI for CI/CD

If it’s hard for you to decide which of these CI tools to use in your project, this comparison table can help:

| | CircleCI | Jenkins |
|---|---|---|
| Requirements | cloud-based, doesn’t require a server | requires a dedicated server |
| Support of custom configurations | basic | full |
| Entry threshold | low | above average |
| Cost | has paid functionality | free |

CircleCI is better for small projects where the main goal is to implement CI/CD as soon as possible.

If you have a big project that requires a lot of custom system settings, Jenkins is your best choice. With Jenkins, you can configure almost anything, but, of course, it will take time.

- Sentry for real-time error tracking

We use Sentry to collect, group, prioritize, and analyze error reports and to fix errors in real time. It shows the steps that led to an error and also tells you when the issue first occurred.
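Sentry does this grouping for you, and its real fingerprinting is considerably more sophisticated. Purely to illustrate the concept, here is a simplified sketch that groups identical errors, tracks when each one was first seen, and puts the most frequent ones first. It assumes each error event is a hypothetical `(timestamp, exception type, location)` tuple.

```python
from dataclasses import dataclass
from typing import Dict, Iterable, List, Tuple

# Hypothetical error event: (timestamp, exception type, code location).
ErrorEvent = Tuple[float, str, str]


@dataclass
class ErrorGroup:
    count: int
    first_seen: float  # timestamp of the earliest occurrence


def group_errors(events: Iterable[ErrorEvent]) -> List[Tuple[Tuple[str, str], ErrorGroup]]:
    """Group events by (exception type, location) and put the most frequent groups first."""
    groups: Dict[Tuple[str, str], ErrorGroup] = {}
    for timestamp, exc_type, location in events:
        key = (exc_type, location)  # a very simple "fingerprint"
        if key not in groups:
            groups[key] = ErrorGroup(count=1, first_seen=timestamp)
        else:
            groups[key].count += 1
            groups[key].first_seen = min(groups[key].first_seen, timestamp)
    # Sorting by frequency is one simple way to prioritize what to fix first.
    return sorted(groups.items(), key=lambda item: item[1].count, reverse=True)


events = [
    (100.0, "KeyError", "views.py:42"),
    (105.0, "ValueError", "forms.py:7"),
    (90.0, "KeyError", "views.py:42"),
]
for (exc_type, location), group in group_errors(events):
    print(exc_type, location, group.count, group.first_seen)
# KeyError views.py:42 2 90.0
# ValueError forms.py:7 1 105.0
```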

- Terraform for infrastructure as code

Terraform is a tool for implementing the “infrastructure as code” practice. With it, we can deploy whole systems with a single command. All we need to do is describe the infrastructure once, and then we can set up as many environments as we want for different purposes. Terraform also lets you see the current configuration of your whole system.

- Ansible for deployment automation

As a web development company, we need to deploy servers quite frequently, and doing that manually would require a dedicated team! Ansible helps us automate this process by executing our deployment scripts.

- Nagios and New Relic for monitoring

These tools collect statistics and logs, group them, and support convenient search and formatting. They can also present the collected data as charts, graphs, or tables, which is priceless when it comes to analyzing metrics.
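Monitoring tools like Nagios rely on small check programs. By the Nagios plugin convention, a check prints a one-line status and exits with code 0 (OK), 1 (WARNING), or 2 (CRITICAL). Here is a minimal sketch of such a disk-usage check in Python; the 80% and 90% thresholds are hypothetical defaults, not values Nagios prescribes.

```python
import shutil
import sys
from typing import Tuple

# Exit codes used by the Nagios plugin convention.
OK, WARNING, CRITICAL = 0, 1, 2


def check_disk(used_percent: float, warn: float = 80.0, crit: float = 90.0) -> Tuple[int, str]:
    """Classify disk usage against thresholds, returning (exit code, status line)."""
    if used_percent >= crit:
        return CRITICAL, f"DISK CRITICAL - {used_percent:.1f}% used"
    if used_percent >= warn:
        return WARNING, f"DISK WARNING - {used_percent:.1f}% used"
    return OK, f"DISK OK - {used_percent:.1f}% used"


def main(path: str = "/") -> None:
    """Measure real disk usage, print the status line, and exit with the matching code."""
    usage = shutil.disk_usage(path)
    code, message = check_disk(100 * usage.used / usage.total)
    print(message)
    sys.exit(code)
```

A small script that calls `main()` could then be registered as a check; keeping the threshold logic in a separate `check_disk` function makes it easy to test without touching a real disk.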

Check out our CI comparison and Docker tutorial on our blog.

DevOps Certification

DevOps certification usually confirms knowledge of a specific tool or set of tools.

AWS Certified DevOps Engineer

The AWS Certified DevOps Engineer is a Professional-level exam for engineers with two or more years of experience with the AWS environment. It validates their abilities in implementing, automating, and managing continuous delivery, monitoring and logging, security controls, compliance validation, etc.

The exam lasts for 170 minutes and costs 300 USD.

The official page provides a list of documents for preparation, and you can also sign up for offline courses. More information can be found in the exam guide.

Azure DevOps Engineer Expert

Azure DevOps Engineer Expert measures skills in designing a DevOps strategy, implementing DevOps development processes, CI/CD, application infrastructure, dependency management, and continuous feedback.

Taking the exam requires previous certification as an Azure Administrator Associate or Azure Developer Associate.

The price varies depending on the country.

Microsoft offers free self-paced preparation, and there are also courses available.

Exams for specific tools

It’s important to remember that DevOps is not a tool or technology, but an approach supported by tools and technologies. And learning how to use the instruments can be a good way to get the idea.

For that purpose, there are a lot of exams for specific tools, like Docker, Jenkins, Ansible, etc.

Yes, just learning Docker won’t make you a great DevOps engineer – but understanding the philosophy, and being able to follow it using tools like Docker, definitely will!

Conclusion

The DevOps approach is based on deep involvement of both the operational and development teams during all steps of product creation. It may take some effort to integrate DevOps in your process, but eventually it will help you to create an agile and scalable system that’s ready for rapid change and growth.

If you have questions about DevOps and how you might apply it to your project, feel free to fill out the contact form. We’ll do our best to help you.

- Is DevOps coding or noncoding?

- We prefer to divide DevOps into three levels of abstraction: philosophy, practices, and tools. While working with development teams may require DevOps professionals to have certain coding skills, their role is more operational and management-focused than coding-focused.

- Can you have DevOps without agile?

- DevOps and Agile are two different methodologies that can be used together but are not dependent on each other. However, taking into account that, today, the whole industry is all about agile product development (and all of us have seen its advantages in action), integrating DevOps is a perfect way to deeply support agile values.

- Is there a difference between DevOps and cloud DevOps?

- Yes, these two terms differ in meaning. DevOps is a set of practices focused on streamlining the software development process and promoting collaboration between developers and IT operations professionals. Cloud DevOps focuses on using DevOps practices to deploy and manage applications in cloud-based environments such as Amazon Web Services (AWS), Microsoft Azure, and Google Cloud Platform.

- What capabilities should a DevOps environment possess?

- A DevOps environment should provide capabilities that support a successful software development and delivery pipeline. For example, at Django Stars, we use the following DevOps practices:

- Continuous integration and continuous delivery (CI/CD)

- Infrastructure as code (IaC)

- Microservices

- Configuration management

- Monitoring and logging

- Automation

- Are the services of DevOps engineers more expensive than software developers?

- The cost of DevOps engineers may be higher than that of software developers, but the value they provide in optimizing the software development process can be significant. To evaluate whether DevOps integration will be cost-effective for your business, contact Django Stars.